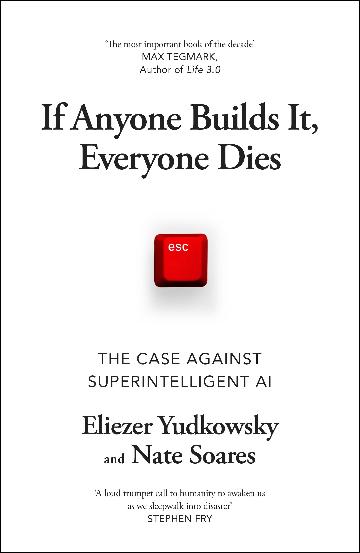

If you haven’t used AI yet, you’re in a minority – it’s coming for us all. To most, that just means it’s going to change the way we work. But big names from Elon Musk to the late Stephen Hawking have warned about Terminator- or Matrix-like dangers of smart computers ‘deciding’ to eradicate humanity (to save the environment, for example), and the authors, the current and former directors of an American AI think tank, have joined them.

Yudkowsky and Soares don’t mince words, either in the title or the content of the book. Computer scientists by training, they explain how AI works in the first few chapters – much of which will go over your head if you’re not technologically minded.

But they don’t hold back trying to convince you that when we achieve what’s called ‘artificial general intelligence’ (a step up from just having ChatGPT give you banana bread recipes or reword a clumsily written email), it will mean the end of humankind. It will, they assert in no uncertain terms, decide to destroy us. And once AI has access to military, civil, biomedical or other systems through which it can launch nukes or synthesise and release a killer virus, it’ll have the tools to do so.

The book is very alarmist, but opinions on the topic abound and the jury’s still out. When you look deeper, the authors give us plausible reasons for how AI might end the species, but they fail to make a totally convincing argument about why it will. Still, it’s a slightly nerve-wracking read …

Book review by Drew Turney
